The market continues to celebrate AI as a virtually limitless technological revolution. Yet behind the stock market records set by American hyperscalers may lie one of the largest infrastructure spending cycles in modern history — with considerable risks to future returns on invested capital.

The debate is no longer merely technological. It is now becoming an energy, industrial, financial, and even macroeconomic issue.

For years, investors have valued tech giants as ultra-scalable software platforms: low marginal costs, high margins, and virtually infinite growth. But generative AI is gradually breaking this model. Large language models require massive amounts of GPUs, electricity, cooling, data centers, and networks. The more sophisticated the models become, the more compute consumption skyrockets.

The bull case on which part of the artificial intelligence sector’s valuation now rests is no longer simply the idea that more people will use chatbots like ChatGPT. The truly optimistic scenario, set out by Goldman Sachs in a study published this week, is far more ambitious: autonomous AI agents.

To understand the difference, we must first picture how a typical chatbot works today. When a user asks ChatGPT a question, the system generates a response, and then the conversation stops until the next query. The AI thus acts as an on-demand assistant: it answers a question, writes a text, summarizes a document, or translates a sentence.

An AI agent, on the other hand, would operate in a much more autonomous and continuous manner. Instead of simply answering a single question, it could perform entire tasks independently. For example:

- plan a complete trip;

- compare hundreds of options;

- book tickets;

- monitor prices in real time;

- draft and send emails;

- analyze documents;

- correct its own mistakes;

- verify multiple sources;

- use external software;

- or even supervise other specialized agents.

In practice, this means that an AI agent no longer just produces a quick response, but “thinks” for much longer and performs a vast number of internal operations that are invisible to the user.

And this is where the issue becomes extremely important from an economic standpoint.

Every operation performed by an AI model consumes “tokens.” A token is a small unit of text, typically a word or part of a word, that models read and generate. The more an AI reads, writes, verifies, reasons, or maintains a long conversation, the more tokens it consumes, and thus the more computing power it uses.

A traditional chatbot uses relatively few resources: a question, an answer.

But an autonomous agent can:

- read the same information multiple times;

- test different lines of reasoning;

- perform checks;

- run searches;

- use external tools;

- maintain massive contexts;

- run continuously in the background.

The result: computing power consumption is skyrocketing.
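A toy calculation makes the gap concrete. This is a minimal sketch under loudly hypothetical assumptions (prompt and answer sizes, number of agent steps, size of each tool result); none of these numbers come from Goldman Sachs. The one mechanism it models is that an agent re-reads its whole, growing context at every step.

```python
# Toy comparison of token consumption: one chatbot exchange vs. a multi-step agent.
# All parameters are hypothetical illustrations, not measured or published figures.

def chatbot_tokens(prompt: int, answer: int) -> int:
    """One question, one answer: the model reads the prompt and writes the answer."""
    return prompt + answer

def agent_tokens(prompt: int, steps: int, output_per_step: int, tool_result_per_step: int) -> int:
    """Agent loop: at each step the model re-reads the full accumulated context,
    writes some output (reasoning or a tool call), and a tool result is appended."""
    context = prompt
    total = 0
    for _ in range(steps):
        total += context + output_per_step                   # read everything so far, then write
        context += output_per_step + tool_result_per_step    # the context keeps growing
    return total

chat = chatbot_tokens(prompt=200, answer=500)
agent = agent_tokens(prompt=200, steps=20, output_per_step=500, tool_result_per_step=1_000)
print(chat, agent, round(agent / chat))
```

With these made-up parameters, the agent run consumes over 400 times the tokens of the single exchange, and doubling the number of steps roughly quadruples the total, because each step re-reads an ever longer context. The sketch ignores prompt caching, which can lower the cost of re-reading in practice.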

This is precisely what Goldman Sachs sees as the core of the bullish scenario for AI. The market is no longer betting solely on “more users,” but primarily on “many more computations per user.”

In other words, even if the unit cost of models falls, the total number of computations required could increase much more rapidly. Goldman estimates that global computing capacity needs for AI could be more than 24 times the current capacity by 2030.
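Two back-of-the-envelope calculations show what the 24× figure implies. The five-year horizon (roughly today to 2030) and the tenfold fall in unit cost are illustrative assumptions, not Goldman estimates. With $g$ the annual growth rate of compute capacity, $c$ the cost per unit of compute, $V$ the volume of compute, and $S$ total compute spending:

$$
(1+g)^5 = 24 \;\Rightarrow\; g = 24^{1/5} - 1 \approx 0.89
$$

$$
S = c \cdot V, \qquad \frac{S_{2030}}{S_{0}} = \frac{c_{2030}}{c_{0}} \cdot \frac{V_{2030}}{V_{0}} = \frac{1}{10} \cdot 24 = 2.4
$$

In other words, capacity would have to grow by roughly 89% a year for five consecutive years, and even a tenfold drop in the cost per unit of compute would still leave total spending about 2.4 times higher.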

This completely changes the economic nature of the sector.

Until now, AI has mostly resembled an extremely costly endeavor:

- construction of giant data centers;

- purchase of thousands of GPUs;

- enormous electricity consumption;

- massive investments in infrastructure.

The problem was that the revenue generated still sometimes seemed insufficient to cover these colossal expenses.

But if AI agents become ubiquitous and constantly consume enormous amounts of computing power, then this infrastructure could be put to much better use. Data centers would operate at full capacity, GPUs would be amortized more efficiently, and margins could begin to improve significantly.
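The utilization argument can be sketched with a simple unit-cost model: a fixed annual depreciation charge per accelerator plus an energy bill that scales with use, divided by the tokens actually served. Every parameter (purchase price, useful life, power draw, electricity price, throughput) is a hypothetical assumption for illustration, not vendor or Goldman data, and idle power draw is ignored for simplicity.

```python
# Toy model: how utilization changes the cost per token of an AI accelerator.
# Every default parameter below is a hypothetical illustration.

HOURS_PER_YEAR = 8_760
SECONDS_PER_YEAR = HOURS_PER_YEAR * 3_600

def cost_per_million_tokens(
    utilization: float,
    capex_usd: float = 30_000.0,         # hypothetical purchase price per accelerator
    useful_life_years: float = 5.0,      # hypothetical straight-line depreciation period
    power_kw: float = 1.0,               # hypothetical draw at full load, incl. cooling
    usd_per_kwh: float = 0.10,           # hypothetical electricity price
    tokens_per_second: float = 1_000.0,  # hypothetical throughput at full load
) -> float:
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    # Fixed cost: depreciation is paid whether the hardware is busy or idle.
    depreciation = capex_usd / useful_life_years
    # Variable cost: energy scales with utilization (idle power ignored).
    energy = power_kw * HOURS_PER_YEAR * usd_per_kwh * utilization
    tokens = tokens_per_second * SECONDS_PER_YEAR * utilization
    return (depreciation + energy) / tokens * 1_000_000

for u in (0.2, 0.5, 0.8):
    print(f"utilization {u:.0%}: ${cost_per_million_tokens(u):.2f} per million tokens")
```

Because the fixed depreciation charge dominates in this setup, moving from 20% to 80% utilization cuts the cost per token by roughly 3.7 times, which is the mechanism behind the margin “inflection point” described below.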

This is what Goldman calls the “inflection point” for margins: the moment when AI ceases to be primarily a cost center and potentially becomes a highly profitable business.

This optimistic scenario immediately runs up against a very real limitation: the physical world.

Operating AI agents on a massive scale requires:

- huge amounts of electricity;

- thousands of additional GPUs;

- new data centers;

- cooling systems;

- upgraded power grids.

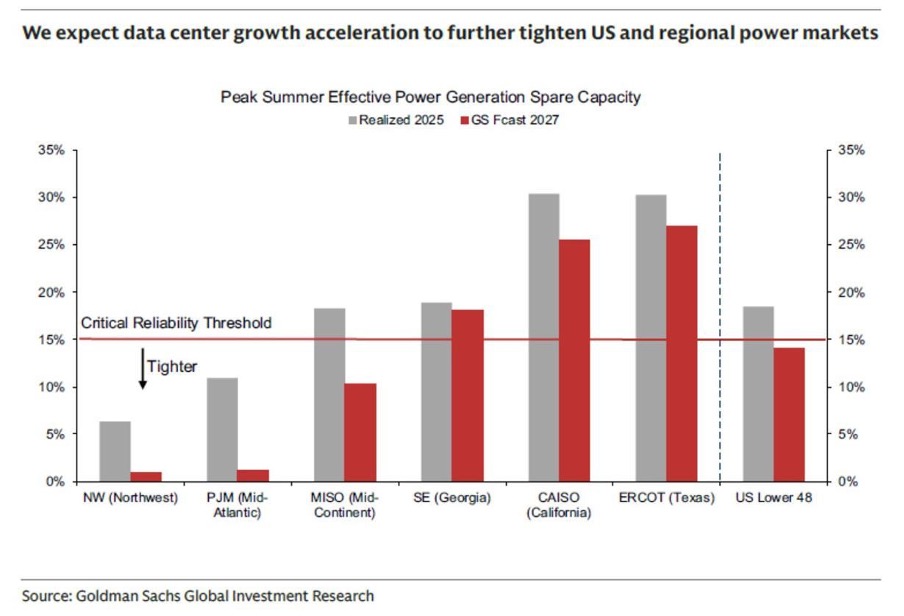

And that’s where the concerns begin. The U.S. power grid is already showing signs of strain in certain regions: connection times are lengthening sharply, transformers are in short supply, local communities sometimes oppose data center projects, and energy costs are rising.

This is the fundamental paradox of the AI cycle: the only scenario in which the massive investments actually pay off is also the one that risks pushing the world’s energy infrastructure to its physical limits.

Current projections assume tens of gigawatts of new electrical capacity, thousands of additional GPUs, and a massive acceleration in data center construction. Yet bottlenecks are already beginning to appear everywhere: unavailable transformers, connection waitlists, turbine shortages, copper shortages, water constraints, local opposition, grid saturation, and administrative delays.

Goldman Sachs explicitly acknowledges in its research that the longer data center construction timelines become, the lower the probability that these projects will actually become operational. In other words, part of the future revenue currently factored into the market valuations of tech giants may rest on projects that are not yet operational, some of which may never come online within the announced timelines.
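One way to see why this matters is to weight each project’s revenue by its probability of actually coming online. The sketch below assumes, purely for illustration, that each extra year of lead time keeps a project on track with a constant probability; the projects, revenue figures, and 85% annual survival rate are all invented and are not Goldman’s model.

```python
# Hypothetical illustration: headline pipeline revenue vs. probability-weighted revenue
# when the chance of a data center coming online falls with its delivery lead time.
# Projects, revenues, and the survival rate are invented for illustration.

from dataclasses import dataclass

@dataclass
class Project:
    name: str
    annual_revenue_bn: float  # revenue once operational, in $ billions
    years_to_delivery: int    # lead time before the site is expected to be online

def delivery_probability(years: int, annual_survival: float = 0.85) -> float:
    """Assumed model: each year of lead time independently keeps the project on
    track with probability `annual_survival` (constant hazard, geometric decay)."""
    return annual_survival ** years

def weighted_pipeline(projects: list[Project], annual_survival: float = 0.85) -> tuple[float, float]:
    headline = sum(p.annual_revenue_bn for p in projects)
    weighted = sum(
        p.annual_revenue_bn * delivery_probability(p.years_to_delivery, annual_survival)
        for p in projects
    )
    return headline, weighted

pipeline = [
    Project("site A (under construction)", 10.0, 1),
    Project("site B (permits pending)", 10.0, 3),
    Project("site C (land acquired only)", 10.0, 5),
]
headline, weighted = weighted_pipeline(pipeline)
print(f"headline: ${headline:.1f}bn, probability-weighted: ${weighted:.1f}bn")
```

Under these assumptions, a $30bn headline pipeline is worth only about $19.1bn once delivery risk is priced in, and the most distant project contributes the least.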

This is an extremely important point, but one that is often misunderstood by non-specialist investors.

Today, hyperscalers like Microsoft, Amazon, Google, and Meta talk a lot about their backlog — that is, future revenue commitments already signed with customers or anticipated through infrastructure projects.

In theory, this reassures the markets: the more the backlog grows, the more investors view future growth as “visible” and relatively secure.

The problem is that a backlog does not necessarily mean that actual capacity already exists.

In the case of AI, a large portion of this future revenue still depends on infrastructure that must:

- be built;

- obtain the necessary permits;

- be connected to the power grid;

- receive a sufficient supply of GPUs;

- have access to water and cooling systems;

- and actually operate at full capacity.

However, many of the data center projects announced today are still:

- at the land acquisition stage;

- under administrative review;

- awaiting permit approval;

- or without confirmed electrical capacity.

In other words, the market sometimes values future revenues as if they were already virtually guaranteed, even though the necessary physical infrastructure does not yet exist.

Let’s take a simple example.

Imagine that a company announces the construction of a massive factory capable of generating billions of euros in revenue within three years. Investors might immediately begin to factor this future revenue into their valuations.

But what if, in the meantime:

- environmental permits are delayed;

- costs skyrocket;

- there is a power shortage;

- equipment is not delivered;

- or financing becomes more difficult?

The factory could then be:

- delayed;

- scaled back;

- or, in some cases, abandoned.

This is exactly the risk that Goldman Sachs is beginning to highlight regarding AI data centers.

The market is currently acting as if “everything that is announced will be delivered.”

But the history of large-scale infrastructure projects shows that this is almost never the case.

The further in the future a project’s delivery lies, the greater the risks:

- cost inflation;

- energy constraints;

- political opposition;

- financing difficulties;

- technological changes;

- or declining profitability.

And in the case of AI, these risks are particularly high because the physical requirements are enormous.

A large, modern AI data center requires more than just computers. It also needs:

- high-voltage power lines;

- transformers;

- turbines;

- cooling systems;

- sometimes as much electricity as an entire city.

The problem is that Western power grids were not designed to handle such a rapid surge in demand.

This is why Goldman Sachs explains that the further out a data center’s delivery timeline is, the lower the actual probability that it will become operational.

In other words, part of the pipeline currently presented to investors may be more of a theoretical ambition than a guaranteed capacity.

And this drastically changes how we interpret current valuations.

Today, markets are pricing in:

- future revenues;

- expected margins;

- GPU requirements;

- and even energy demand.

All of this is priced as if the infrastructure will be delivered on schedule and operate perfectly.

But if:

- construction falls behind schedule;

- grid connections cannot be secured;

- energy costs soar;

- or technology evolves faster than expected,

then certain projects could generate far less revenue than the market currently anticipates.

The risk, therefore, is not necessarily that AI will “fail.”

The real risk could be that markets have already priced in a future infrastructure that does not yet actually exist in the physical world.

This issue becomes even more concerning when we look at the very structure of the financing behind the AI boom. The hyperscalers themselves seem reluctant to carry these massive investments alone on their balance sheets. Private credit structures and special-purpose vehicles (SPVs) are multiplying in order to shift part of the financial risk off balance sheet. Meta, for example, recently structured several data center financing vehicles with Blue Owl, gradually turning AI infrastructure into a genuine structured credit product.

The U.S. investment-grade bond market is also becoming increasingly dependent on the AI build-out. On a duration-weighted basis, hyperscalers and data center financing structures now account for a very large share of new bond issuance. The problem is clear: creditors, whose upside is capped at their coupons and the return of principal, are financing an extremely capital-intensive sector, while shareholders capture the bulk of the potential gains if AI succeeds.
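The asymmetry between creditors and shareholders can be sketched with the textbook structural view of debt and equity as claims on the same assets (in the spirit of the Merton model). This is a stylized illustration, not a model of any actual AI financing: it assumes a single zero-coupon debt claim, ignores coupons, recovery costs and refinancing, and uses hypothetical dollar figures.

```python
# Stylized payoff at maturity for a project financed with debt and equity.
# Assumption: one zero-coupon debt claim with face value `debt_face`;
# creditors are paid first and shareholders receive whatever is left.

def creditor_payoff(asset_value: float, debt_face: float) -> float:
    # Creditors never receive more than they are owed (capped upside),
    # but absorb losses once asset value falls below the debt.
    return min(asset_value, debt_face)

def shareholder_payoff(asset_value: float, debt_face: float) -> float:
    # Shareholders keep everything above the debt (uncapped upside);
    # limited liability floors their payoff at zero.
    return max(asset_value - debt_face, 0.0)

DEBT = 60.0  # hypothetical face value of debt, in $ billions
for value in (30.0, 60.0, 100.0, 200.0):  # hypothetical project values at maturity
    print(value, creditor_payoff(value, DEBT), shareholder_payoff(value, DEBT))
```

If the project ends up worth $200bn, creditors still receive only their $60bn while shareholders take $140bn; if it is worth $30bn, shareholders get nothing and creditors bear a $30bn shortfall. Creditors share the downside without sharing the upside.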

But the most underestimated risk today could paradoxically come from… technological progress itself.

New AI architectures aim to make models much more efficient by avoiding unnecessary calculations.

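Today, the models behind tools like ChatGPT use “dense” attention: each token is compared with the other tokens in the text, even when many of these links aren’t really useful. Because the number of comparisons grows with the square of the text length, this requires enormous computing power, especially for the massive contexts that agents maintain.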

In contrast, so-called “sparse attention” systems attempt to process only the information that is truly important, much like a human who focuses solely on the key passages in a book.
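The cost difference can be illustrated by counting how many query-key scores a model computes. “Sparse attention” covers many designs; as a stand-in, this sketch uses one of the simplest, a sliding window in which each token only looks at itself and a fixed number of preceding tokens. The 1,000-token window is a hypothetical choice, and the sketch counts work only: it says nothing about whether model quality holds up.

```python
# Count query-key attention scores for a text of n tokens.
# Dense causal attention vs. one simple sparse pattern: a sliding window where
# each token attends only to itself and the `window - 1` tokens before it.

def dense_causal_scores(n: int) -> int:
    # Token i attends to tokens 0..i, i.e. i + 1 scores: n(n + 1) / 2 in total.
    return n * (n + 1) // 2

def sliding_window_scores(n: int, window: int) -> int:
    # Token i attends to at most `window` tokens (itself plus earlier ones).
    return sum(min(i + 1, window) for i in range(n))

for n in (1_000, 10_000, 100_000):
    dense = dense_causal_scores(n)
    sparse = sliding_window_scores(n, window=1_000)
    print(f"{n:>7} tokens: dense {dense:,}, sliding window {sparse:,}, ratio {dense / sparse:.1f}")
```

The dense count grows with the square of the text length while the windowed count grows roughly linearly, so the gap widens as contexts get longer: about 5 times at 10,000 tokens and about 50 times at 100,000 in this example.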

If these approaches work at scale, they could significantly reduce the GPUs, electricity, and data centers required for AI, and disrupt the current cycle of chronic GPU and electricity shortages.

In other words, the market may be building a massive amount of infrastructure on the assumption that computing needs will keep exploding at their current pace. Yet the history of technology often shows the opposite: software efficiency gains end up drastically reducing anticipated hardware needs.

The parallel with telecoms in the late 1990s is becoming increasingly striking. The internet revolution was real. The demand was real. But profitability projections and infrastructure investments turned out to be largely excessive. The result was massive capital destruction despite long-term technological success.

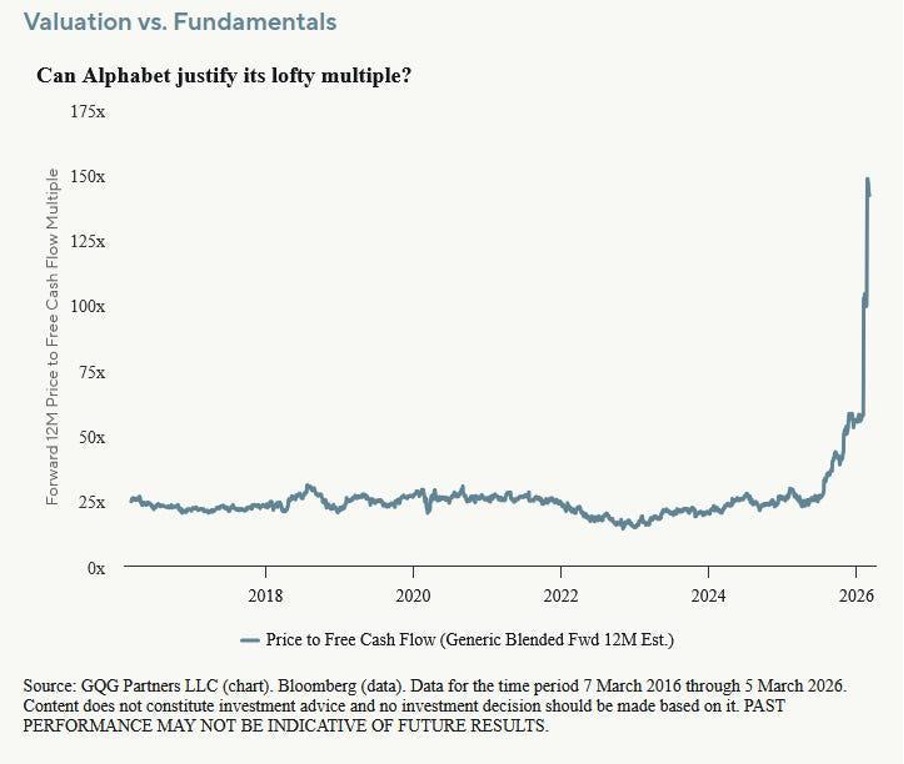

The example of Alphabet perfectly illustrates this growing risk of a disconnect between valuation and economic reality.

Alphabet is now trading at around 133 times its free cash flow, compared to about 20 times before COVID.

However, its free cash flow has barely grown since 2021, and several major institutional investors are beginning to voice open concern about this trend.
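As a quick check on what the 133× and 20× multiples imply, a price-to-free-cash-flow ratio can be inverted into a free cash flow yield, the cash generated per dollar of market value. The two multiples come from the text; the rest is arithmetic, holding the share price constant in the second line:

$$
\text{FCF yield} = \frac{\text{FCF}}{\text{market value}} = \frac{1}{P/\text{FCF}}, \qquad \frac{1}{133} \approx 0.75\%, \qquad \frac{1}{20} = 5\%
$$

$$
\frac{\text{FCF}_{\text{required}}}{\text{FCF}_{\text{today}}} = \frac{133}{20} \approx 6.7
$$

At today’s price, investors receive roughly 0.75 cents of free cash flow per dollar invested, versus 5 cents before COVID, and free cash flow would have to grow almost sevenfold just to bring the multiple back to its pre-COVID level.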

First problem: AI poses a direct threat to Google’s traditional revenue model. An increasing proportion of searches now end without an ad click. And because search ads are paid largely per click, no click means no associated revenue.

Second problem: the explosion in capital expenditures. Google projects between $175 billion and $185 billion in capital expenditures for 2026, even though Google Cloud generated only about $59 billion in revenue in 2025. The system’s economics are beginning to resemble those of an energy-intensive utility more than those of a high-margin software publisher.

Third problem: advertising cyclicality. When the economy slows, advertising budgets are historically among the first to be cut. In 2022, Alphabet’s share price fell nearly 40% amid a slowdown in the advertising market.

Alphabet remains, of course, an exceptional company. But at these valuation levels, the market leaves virtually no margin for error, even as risks are simultaneously mounting: soaring capex, pressure on margins, energy dependence, AI competition, refinancing needs, and uncertainty about the true future returns on invested capital.

In this context, gold is gradually regaining its traditional role as a safe-haven asset in the face of excessive valuations, debt cycles, and major financial imbalances. If the AI boom continues to push the system toward greater leverage, energy dependence, and credit fragility, precious metals could once again become one of the few assets capable of protecting investors against a potential reversal in the technology cycle and the credit market.

Reproduction, in whole or in part, is authorized as long as it includes all the text hyperlinks and a link back to the original source.

The information contained in this article is for information purposes only and does not constitute investment advice or a recommendation to buy or sell.